Using an AI coding agent is simple. Using them in your team is not simple at all.

At our company we’re using coding agents a lot. But what we actually need is for us to work together.

Ugh, my AI has such a bad memory. Every time we start a task, it’s forgotten about it. No, I’m not saying things have slipped its mind. Totally forgotten. Like the project never existed.

I’m the CEO and CTO of a tiny software company. The idea was that I stopped coding and started running the company like an adult.

But then AI happened and I was hooked.

Within our company, we’ve been using AI since the betas became available. First for simple admin tasks, but increasingly in our production processes. To the point where using AI coding agents is commonplace and expected.

Despite its minimal memory span, AI has cut lead time so dramatically that it’s changed the very fabric of our thinking about what it means to initiate a feature. Or a project.

Still, the AI coding agent remains far removed from the project board. Project management is still about managing humans, not agents.

We know AI can build a new website faster than you can clap your hands. But as creatives say, we don’t want AI to take over our work, we want it to do the dishes and mow the lawn.

Reading about how big companies treat AI in the software workplace gives the impression that they’re firing expensive, mouthy seniors, leaving cheap throwaway juniors to handle coding agents. I don’t know how true that is, but we’re doing the opposite. We like critique, especially from the brilliant people we have on board.

Anyway, from this premise comes the idea of a very highly automated Django Web Studio, our company, where AI coding agents do most of the grunt work, freeing up time for the thinking, ethical and empathetic humans to do the real hard stuff.

This is going to be a big task, and today I want to focus on a tiny, but critical piece of the puzzle.

You see, my AI is so fast. It’s so easy to do a proof-of-concept, to build whole marketing websites in a flash. And that’s all fine, but what we need is AI to do the menial tasks as well.

To do tickets. Just like everyone.

AI coding agents could finally do the tasks we let lie because of time constraints. But now the constraint is not fixing the bugs, it’s the human in the loop.

Wouldn’t it be great if an AI coding agent could handle the tickets themselves?

Let’s look at what that would entail.

So what does an AI need to be capable of, to handle tickets?

Well, first off, to read them.

Our company uses Jira, and we’ve long made use of their API for all sorts of useful tasks. Such as for our weekly reports to our clients, where AI rewrites ticket descriptions into understandable language for laypeople.

Myself, I have a Python project that creates worklogs from the spreadsheet I’ve been using for the last fifteen years to log my time. It’s class-based and can do basic CRUD operations.

Perfect for reading tickets.
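To make that concrete, here is a minimal sketch of what reading a ticket could look like. It is not my actual project: the class name `JiraReader` and the injectable `fetch_json` function are made up for illustration, and it assumes Jira's REST endpoint `/rest/api/2/issue/{key}` and whatever authorization header your instance expects.

```python
import json
from typing import Callable
from urllib.request import Request, urlopen


def http_get_json(url: str, headers: dict) -> dict:
    """Default transport: a plain GET that decodes a JSON response."""
    with urlopen(Request(url, headers=headers)) as response:
        return json.loads(response.read().decode("utf-8"))


class JiraReader:
    """Hypothetical read-only client, for illustration only."""

    def __init__(self, base_url: str, auth_header: str,
                 fetch_json: Callable[[str, dict], dict] = http_get_json):
        self.base_url = base_url.rstrip("/")
        self.headers = {"Authorization": auth_header, "Accept": "application/json"}
        self.fetch_json = fetch_json  # injectable, so the class can be tested offline

    def read_ticket(self, key: str) -> dict:
        """Return the fields an agent needs to start work on a ticket."""
        issue = self.fetch_json(f"{self.base_url}/rest/api/2/issue/{key}", self.headers)
        fields = issue["fields"]
        return {
            "key": issue["key"],
            "summary": fields["summary"],
            "description": fields.get("description") or "",
            "status": fields["status"]["name"],
        }
```

Passing the transport in as a function is a design choice: the agent can be exercised against canned responses before it is ever pointed at a real Jira.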

Obviously we’d need the agent to have access to all of the projects. I have them all on my local, but you could just as well spin up a server for it.

Then you’d define global rules for the AI, explaining which Jira project key to use for each project. What the path is to follow. Which virtual environment to enter, or, for frontend, which version of node.
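Such rules could be as simple as a lookup table. The sketch below is one possible shape, not a finished design: the `ProjectRule` fields mirror the things listed above, and every key, path and version in `RULES` is invented for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ProjectRule:
    jira_key: str                       # Jira project key, the "WEB" in WEB-123
    path: str                           # where the checkout lives on the agent's machine
    virtualenv: Optional[str] = None    # Python virtual environment to enter, if any
    node_version: Optional[str] = None  # Node version for frontend projects, if any


# All values below are made up for illustration.
RULES = [
    ProjectRule("WEB", "/srv/projects/web", virtualenv="/srv/venvs/web"),
    ProjectRule("SHOP", "/srv/projects/shop-frontend", node_version="20"),
]
RULES_BY_KEY = {rule.jira_key: rule for rule in RULES}


def rule_for_ticket(ticket_key: str) -> ProjectRule:
    """Look up the rule for a ticket key such as 'WEB-123'."""
    project_key = ticket_key.split("-", 1)[0].upper()
    try:
        return RULES_BY_KEY[project_key]
    except KeyError:
        raise LookupError(f"No rule defined for Jira project {project_key!r}") from None
```

Failing loudly on an unknown project key seems safer than letting the agent guess which codebase it should be in.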

You’d also need a way to tell the agent how to respond to commands, like ‘work on ticket x’.
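A first stab at that could be a small parser that turns a sentence into a ticket key. The accepted phrasing and the key format in the pattern below are assumptions; a real setup would probably lean on the agent's own instruction files instead.

```python
import re
from typing import Optional

# Accepts e.g. "work on ticket WEB-123" or "Work on WEB-123"; the phrasing is an assumption.
COMMAND_PATTERN = re.compile(
    r"^\s*work on (?:ticket\s+)?([A-Za-z][A-Za-z0-9]*-\d+)\s*$",
    re.IGNORECASE,
)


def parse_command(text: str) -> Optional[str]:
    """Return the normalised ticket key, or None if the text is not a work command."""
    match = COMMAND_PATTERN.match(text)
    return match.group(1).upper() if match else None
```

Anything that doesn't match returns `None`, so the agent can ask what you meant rather than start work on the wrong ticket.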

Assuming the agent got it right the first time, you’d need to enable the agent to commit and push the changes to a dedicated branch, create a pull request, create a comment for the ticket, and set the ticket’s status to “ready to test”, of course after setting status to “developing” when starting work.
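The order of those steps matters, so it helps to write it down. In this sketch the function `run_ticket` and all of its callables are hypothetical placeholders for whatever talks to git, your code host and Jira; only the two status names come from our workflow.

```python
from typing import Callable


def run_ticket(ticket_key: str,
               set_status: Callable[[str, str], None],
               do_work: Callable[[str], None],
               open_pull_request: Callable[[str, str], str],
               add_comment: Callable[[str, str], None]) -> str:
    """Walk one ticket through the sequence described above and return the PR URL."""
    branch = f"agent/{ticket_key.lower()}"       # dedicated branch; the naming is an assumption
    set_status(ticket_key, "developing")         # first, so humans can see the ticket is taken
    do_work(branch)                              # edit, test, commit and push on that branch
    pr_url = open_pull_request(branch, ticket_key)
    add_comment(ticket_key, f"Pull request: {pr_url}")
    set_status(ticket_key, "ready to test")      # last, only once the PR exists
    return pr_url
```

If any step raises, the ticket simply stays in “developing”, which is at least an honest description of where things stand.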

Wow. This is assuming a lot. You can trust the agent to test, but can you really trust the outcome? You’ve had the agent create a dedicated branch, but then you merge yourself? Then test manually anyway? Isn’t that defeating the purpose?

Yes, CI/CD, you might say, but many of the projects we run for clients don’t have the necessary coverage because, well, clients tend to focus on features they can see and sell. We’re changing our business model in part to cope with that; how exactly is a story for another time.

Even so, we consider bringing changes into production without human intervention a bridge too far right now.

We’re designing a system that leverages both the speed of AI and the trust that ‘humans in the loop’ brings.

But let’s start with something practical. Let’s design a system where the AI coding agent handles bugs. What would that look like, building upon the set of tools and services our company uses at the moment?

We use Sentry for bug tracking. We run some pretty complex projects, some of them hundreds of thousands of lines of code, many hundreds of features. As a consequence, there’s always something going wrong. Critical issues come up on our monitoring, so it’s never “site down”. They’re generally relatively minor edge case occurrences.

If an issue reaches a certain threshold, Sentry sends us a message. We bring that into our daily standup, and if it looks serious enough to act upon, our PMs create a ticket.

Let’s say we have an N+1 issue, too many database queries, which can happen under specific circumstances.

Actually, very much like a traditional development cycle. Only, look, no (hardly any) humans!

Traditional software development gives the ticket to a human developer, who assesses the issue in the light of their insight into the codebase and the overall goals of the application. The human developer studies the ticket, studies the codebase, comes up with a solution: add “select_related” to the query.

An AI coding agent has a tremendous advantage over a human project manager in that it can read the code of the project before creating the ticket.
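For anyone who hasn't met this bug: the problem is one query for a list, plus one extra query per item in it. The sketch below is a toy simulation in plain Python, not Django code; `CountingDatabase` and its posts and authors are invented purely to count queries.

```python
class CountingDatabase:
    """Toy stand-in for a database that counts how many queries it receives."""

    def __init__(self, posts, authors):
        self.posts, self.authors, self.query_count = posts, authors, 0

    def all_posts(self):
        self.query_count += 1
        return list(self.posts)

    def author_by_id(self, author_id):
        self.query_count += 1
        return self.authors[author_id]

    def all_posts_with_authors(self):
        # One joined query, which is roughly what select_related asks the database for.
        self.query_count += 1
        return [(post, self.authors[post["author_id"]]) for post in self.posts]


def titles_naive(db):
    # 1 query for the posts, then 1 more per post: the N+1 pattern.
    return [f'{post["title"]} by {db.author_by_id(post["author_id"])}'
            for post in db.all_posts()]


def titles_joined(db):
    # A single query, however many posts there are.
    return [f'{post["title"]} by {author}'
            for post, author in db.all_posts_with_authors()]
```

With three posts, `titles_naive` costs four queries and `titles_joined` costs one, for the same output. In Django the equivalent change is usually a single `select_related(...)` call on the queryset.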

The coding agent reads the incoming message and creates a ticket. Having analysed the codebase while creating the ticket, it has also come up with a solution.

Alright, now we have a clear-cut case, where the addition of a “select_related” would resolve the issue. Simple, yes?

But who will know it’s simple? The project manager? No. Our PM might know about the goals of the project, but not about the nitty gritty details of the implementation. And how a change might affect usability.

Wait, can’t our coding agent do that too? It’s already scanned the codebase to create the ticket, and added a document where not only the technical details are listed, but also the broader rationale behind the application.

Sure. Let the agent do the ticket. We’ll add a user to our Jira: agent1. The project manager assigns the ticket to that user; our agent polls for new assignments every minute and adds the ticket to its queue.
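The polling part is the easy bit. Here is a minimal sketch, assuming some function (called `fetch_assigned` here, a made-up name) that returns the ticket keys currently assigned to agent1:

```python
from collections import deque
from typing import Callable, Deque, Iterable, Set


class AssignmentPoller:
    """Collects newly assigned ticket keys into a first-in, first-out queue."""

    def __init__(self, fetch_assigned: Callable[[], Iterable[str]]):
        self.fetch_assigned = fetch_assigned  # e.g. a Jira search for tickets assigned to agent1
        self.queue: Deque[str] = deque()
        self.seen: Set[str] = set()

    def poll_once(self) -> int:
        """Queue tickets not seen before and return how many were added."""
        added = 0
        for key in self.fetch_assigned():
            if key not in self.seen:
                self.seen.add(key)
                self.queue.append(key)
                added += 1
        return added
```

A scheduler, or a plain loop with `time.sleep(60)`, would call `poll_once()` every minute. The `seen` set is what stops a ticket that is still assigned from being queued again on the next poll.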

Aaaand it’s done.

The AI adds a comment to the ticket, sets the status to “ready to test”.

What’s the “definition of done”? That the issue doesn’t appear in Sentry anymore. And who’s looking at Sentry in that kind of detail? Especially if it’s indeed an issue that appears under certain circumstances. Traditionally, we trust a human developer. Are we to trust our coding agent now?

Thing is, traditional software development moves SO much slower, and AI-powered coding agents SO much faster, that the human in the loop is now the impediment.

Let’s create an AI-powered logging system that automatically creates tickets and resolves them, while keeping humans in the loop.

Why would we need Sentry at all?

I want to paint a picture for you.

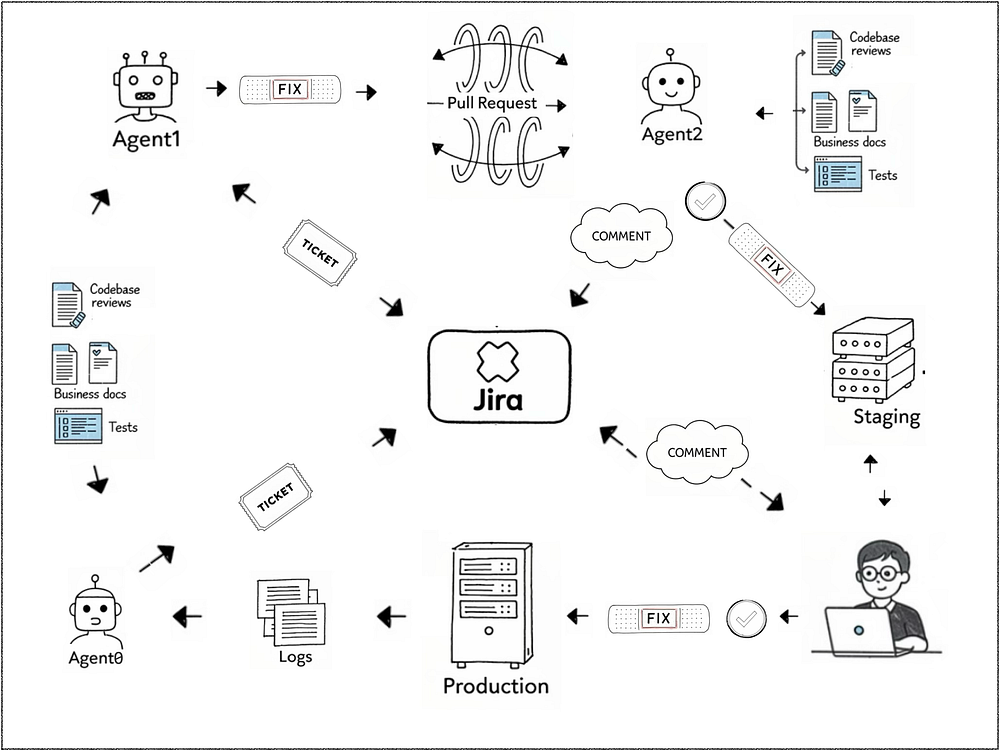

1️⃣ We have a coding agent on our servers; it has access to logs 24/7.

2️⃣ We’ll create a priority system, have a scheduled worker issue a prompt every x to review the logs.

3️⃣ Now, the N+1 issue comes around again, meets the threshold, and so AI creates the ticket. In doing so, it informs humans that something is amiss, but “I’m on it”.

4️⃣ The agent would fix the issue, create a pull request, deploy to staging. At that point a human would review, and either deploy to production themselves or have the agent do it.
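The priority system from step 2 could start out as a plain priority queue that the scheduled worker drains on each run. The severity levels and the class name `FindingQueue` below are assumptions for the sake of the sketch.

```python
import heapq
import itertools
from typing import List, Optional, Tuple

# Lower number means more urgent; the levels themselves are an assumption.
PRIORITY = {"critical": 0, "error": 1, "warning": 2}


class FindingQueue:
    """Orders log findings by severity, then by arrival."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, str]] = []
        self._counter = itertools.count()  # tie-breaker that keeps arrival order stable

    def add(self, severity: str, message: str) -> None:
        heapq.heappush(self._heap, (PRIORITY[severity], next(self._counter), message))

    def next_finding(self) -> Optional[str]:
        """Return the most urgent finding, or None when the queue is empty."""
        return heapq.heappop(self._heap)[2] if self._heap else None
```

The counter matters more than it looks: without it, two findings of equal severity would be compared by message text, which is not the order anyone wants.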

It’s still the human impediment. The agent could also fix directly on production, but then we have the trust issue again.

If we’re keeping humans around because they deliver values other humans hold dear, why are we bogging them down with reviews of one fix after the other? They end up mowing the lawn after all.

Right. It’s true, they have better things to do than reviewing PRs.

So, what’s a review? It’s the four eyes principle: the idea being that the reviewer has a fresh look at the issue or feature. Has not been through the struggle of trying different ways to come to a solution, perhaps leading to tunnel vision, not seeing every angle. Says, look, have you tried this?

Can we replicate that? Is a second agent going to be able to be the second pair of eyes?

Humans assess if a fix aligns with user experience or business goals. But most importantly, they’re responsible. Humans sign off on code quality and business impact. That’s something our coding agents can’t achieve.

Nevertheless, many fixes or minor features would have a limited impact. If we’re to leverage the speed with which we can respond to issues, such as those that affect users, or maybe a better example, the speed at which cybersecurity threats are exploited by nefarious actors using coding agents, we can’t sit there waiting until a human has time to review.

This is how automated review would work:

The agent, let’s call it Agent0, scans logs (Django, application, or infrastructure) for anomalies, errors, or performance issues. For example, it finds that our N+1 error spikes for UserProfileView.
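At its crudest, that scan is counting. The sketch below assumes a log format of the form `ERROR <ViewName>: <message>`, which is invented for the example; real Django or infrastructure logs would need their own pattern.

```python
import re
from collections import Counter
from typing import Dict, Iterable

# Hypothetical log format: "... ERROR <ViewName>: <message>"
ERROR_LINE = re.compile(r"\bERROR\s+(\w+):")


def views_over_threshold(lines: Iterable[str], threshold: int) -> Dict[str, int]:
    """Count ERROR lines per view and keep the views at or above the threshold."""
    counts: Counter = Counter()
    for line in lines:
        match = ERROR_LINE.search(line)
        if match:
            counts[match.group(1)] += 1
    return {view: n for view, n in counts.items() if n >= threshold}
```

An agent can of course read far more into a log than a regular expression can, but a cheap filter like this decides when it is worth waking the agent up at all.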

The agent creates a Jira ticket with:

Technical details: Stack trace, affected code, proposed fix (add select_related(‘author’)).

Context: This query causes a 500ms delay under load.

Status: “In Progress” (assigned to agent1).
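Put together, the ticket body might be assembled like this. It is a sketch that assumes Jira's create-issue endpoint (`POST /rest/api/2/issue`) and an issue type called “Bug”; the function name and the summary wording are made up.

```python
def build_ticket_payload(project_key: str, view_name: str, proposed_fix: str,
                         context: str, stack_trace: str) -> dict:
    """Assemble the JSON body for creating a Jira issue."""
    description = "\n\n".join([
        f"Affected code: {view_name}",
        f"Proposed fix: {proposed_fix}",
        f"Context: {context}",
        f"Stack trace:\n{stack_trace}",
    ])
    return {
        "fields": {
            "project": {"key": project_key},
            "summary": f"N+1 queries in {view_name}",
            "description": description,
            "issuetype": {"name": "Bug"},  # assumes the project has a "Bug" issue type
        }
    }
```

The status is deliberately left out of the payload. In Jira a status normally changes through a workflow transition, so moving the ticket to “In Progress” would be a separate step after the ticket has been created.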

Ready to get to work:

Agent1 fixes the issue and creates a PR, assigning it to agent2. Part of the fix is to replicate the circumstances under which the N+1 issue occurs. This is added to the application’s test suite, and added to the ticket to enable project managers to test manually if they so wish.

Agent2 comes in, does their own analysis of the codebase, reads documents related to the business behind the project that we’ve created. Then proceeds to assess the fix in the light of all information available. And of course runs all tests. Approves the PR, or comes up with improvements, which are placed in the comments section for AIs and humans to read.

Back to Agent1. Fixes, sends back to reviewer. If we get into a loop at this point we iterate 5 times before jumping out and contacting a human.
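That back-and-forth with an escape hatch is a bounded loop. In the sketch below the three callables stand in for Agent1, Agent2 and whatever channel reaches a human; their names and signatures are assumptions, and only the limit of 5 comes from our plan.

```python
from typing import Callable, Optional, Tuple

MAX_ROUNDS = 5  # from the text: iterate 5 times, then involve a human


def review_loop(fix: Callable[[Optional[str]], str],
                review: Callable[[str], Tuple[bool, str]],
                escalate: Callable[[str], None]) -> bool:
    """Alternate between fixer and reviewer; return True once the PR is approved."""
    feedback: Optional[str] = None
    for _ in range(MAX_ROUNDS):
        patch = fix(feedback)               # Agent1: first attempt, or a revision
        approved, feedback = review(patch)  # Agent2: verdict plus comments
        if approved:
            return True
    escalate(feedback or "no feedback recorded")  # hand the last comments to a human
    return False
```

Passing the reviewer's last comments along on escalation means the human doesn't start from zero when they do get pulled in.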

PR accepted, merged to a dev branch. We’ll automatically deploy to staging at this point.

Now the project manager can step in to activate deployment to production. They can either accept the changes or test manually using the instructions in the ticket. Deployment is done automatically and includes general health checks as well as tests specific to the fix, which are again created by a coding agent.

Results are reported back by adding comments to the original ticket. The ticket is then set to done.

We think we’ll have the best of both worlds in this scenario, the speed of AI while keeping the oversight of humans.

The problem, however, is that the AI we’re all working with now is not up to the task of working without the help of developers.

Right now, our AI coding agents are in constant need of guidance. No way they can complete a task by themselves.

Before 1900, motorcars were considered toys and were notoriously unreliable. Tyres could go flat once or twice a day during a single all-day trip, and engines frequently failed. Often, travelling by car required a chauffeur/mechanic.

By the 1930s, gasoline-powered cars were considered “fairly reliable”. However, “reliable” meant a car might only last 20,000 to 30,000 miles before needing major repairs like an engine overhaul. Even in the 70s a vehicle rarely lived beyond 100,000.

It took until now, more than a century after introduction, to reach the stage where cars very rarely break down, need very little maintenance, and easily reach 200,000 on the odometer. EVs are predicted to do even better.

Our AI coding agents are much the same. They need constant guidance by a senior developer to be useful. Our N+1 bug is simple enough, but many are not. My AI often got stuck in a loop trying to find a syntax error just a few months ago; luckily that has passed. But other issues are still just as elusive, though simple for humans. Think tiny issues where a quick look in web inspector can put a broken interface right. Or AI insisting that an endpoint exists when it’s clearly the wrong one.

Given the ongoing problems with AI we’re not going to be able to trust it to work independently any time soon. And that’s only one of the many issues.

Being one of the team is more than being able to do the job.

Yesterday I happened to be stuck behind a rubbish truck on a narrow road so I couldn’t pass. The truck had a driver I couldn’t see, and two guys at the back. I looked on in utter amazement as they worked in perfect tandem, grinning and gesturing at each other as they collected the wheelie bins from the side of the road, feeding them into the machinery of the truck, then jumping on the running board like perfect ballet dancers while the truck surged on towards the next stop.

Teamwork and joy. That team has it in spades. Our AI: zero.

It’s said that current AI is all neocortex. A neural network is indeed modelled on that part of the human brain that is responsible for language, logic, and reasoning. The neocortex is a miracle of pattern recognition, but it’s useless without the guidance of what’s called the old brain: a giant set of rules encoded into it through eons of evolution. All our instincts and emotions are old brain features. Teamwork is old brain.

We’re still far from empathetic AI that will pat you on the back when you’re feeling down, and take over for you. Whatever the pundits say.

We’re still trying to figure out how AI agents can help best. Like a team of horses pulling a cart, we need to know what they’re doing. They need to know what each of the others is doing.

Early on in our history, humans discovered that some animals were more prone to helping us than others. The wolf became the dog, we the pack it thought to guard. And cats, well, cats are another story.

But horses turned out to be particularly useful, intelligent and empathetic. To ride, and to pull loads. Would easily work together, in pairs, as a troika, as a tandem, four-in-hand, or even eight-horse hitch. Some might be wheelers, might be members of the lead team, the swing team, the point team.

Working together is a little more difficult for humans. We have platforms like Jira to prioritise tasks and coordinate with each other. But if AI agents are going to be truly useful in the workplace, we’re going to need to figure out how they fit in our team.

I recently read an article by Steve Klabnik about a system called Gas Town, which orchestrates coding agents in order for them to work together to find and fix bugs, and do other programming tasks.

The “town” part of the system is, I gather, from the names the internals of the system are given. There’s the mayor, your lead agent, there’s the town, which is your set of projects.

But that’s as far as I get, because after that “rigs” appear, oh yes, building cranes, the town is perpetually under construction, there’s crew members, ok, but polecats? Hooks? And why “Gas” Town?

Anyway.

I bet thousands of companies are thinking how to make AI useful, not only to individual developers, but useful to a software development team as a whole.

Just like we are doing, they’ll be looking to their specific workflow, which is informed by their specific set of projects and the businesses behind those projects.

And just like us, they’ll be navigating the manifold constraints of current AI.

Current AI is all neocortex; there’s no old brain drive that tells them to value team spirit. Were they horses, we could teach them to ride point, or to lead. They’d read human facial expressions, identify emotions. In a team, they’d be known to form deep, long-lasting bonds.

But our AI is nothing like our long-time friend. We’ve had thousands of years of experience together, time enough to get to know each other through and through.

With AI, we’ve just gotten started. But we’re learning.