We used Django-Taggit to power our job platform. How is tagging a precursor to the Neural Networks that power our AI?

Ten years ago, we built an application that has tagging as a core feature. Was it ahead of its time?

We’re a small company that builds custom software for businesses with a unique approach to a certain product or, in this case, a unique approach to marketing that product. I should mention that we built the application more than ten years ago, well before AI went mainstream.

At the core of the solution is tagging.

Many products use tagging: the process of adding attributes to an entity. A content management system, for example, might add named attributes to images so that they can be found later or so that duplicates can be prevented.

In this case, we created what we called a hierarchical tag: a model which can be named and then categorised with multiple attributes before being attached to an entity. Rather than adding attributes to the entity itself, using hierarchical tags allows a greater level of complexity, and allows easy comparisons of entities.

Looking around me for an example: the blanket lying on the chair next to me is checkered red, blue, and two shades of green. It’s made of a wool/synthetic mix, it’s crocheted, and it was made by my ex, who gave it to me for the cold winter nights.

We would create a hierarchical tag that contained fixed choices for colour, material, pattern, technique, origin and many more.

If we attach the tag to a blanket, and then to another blanket, we can pretty accurately compare them.
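To make the comparison concrete, here is a minimal sketch in Python. The `HierarchicalTag` class, its field names and the `similarity` scoring are assumptions made for illustration; they are not the application's actual model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HierarchicalTag:
    """Hypothetical tag: a named bundle of fixed-choice attributes."""
    name: str
    colours: frozenset
    material: str
    pattern: str
    technique: str
    origin: str


def similarity(a, b):
    """Average agreement across attributes, from 0.0 (nothing shared) to 1.0."""
    all_colours = a.colours | b.colours
    # Colours are a set, so score them by overlap (Jaccard index).
    colour_score = len(a.colours & b.colours) / len(all_colours) if all_colours else 1.0
    scores = [
        colour_score,
        float(a.material == b.material),
        float(a.pattern == b.pattern),
        float(a.technique == b.technique),
        float(a.origin == b.origin),
    ]
    return sum(scores) / len(scores)


my_blanket = HierarchicalTag(
    "my blanket",
    frozenset({"red", "blue", "light green", "dark green"}),
    "wool/synthetic", "checkered", "crochet", "handmade",
)
shop_blanket = HierarchicalTag(
    "shop blanket",
    frozenset({"red", "blue"}),
    "wool/synthetic", "checkered", "woven", "factory",
)

print(similarity(my_blanket, shop_blanket))
```

Because every attribute is a fixed choice, the comparison reduces to counting matches, which is why two tagged blankets can be compared without anyone looking at them.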

From philosophers we learn that we humans are categorisation engines.

Tagging is similar to how we humans perceive things in the world. We don’t need much to distinguish one thing from another. In fact, we can literally recognise things from a mile away, when the object of interest is just a blur.

Understanding the nature of an object from a distance is crucial to our survival. And we’ve been thinking about this faculty for thousands of years.

In the fourth century BC, Aristotle held that knowledge comes from observation and abstraction. AI engineers in 2025 AD would recognise the idea.

I perceive an individual object, this particular blanket lying on this particular chair, and I abstract its essential features (wool, crochet) to form general concepts and categories.

The eighteenth-century philosopher Immanuel Kant drew up an intricate and intriguing system of categories that has percolated into the world of engineering. Pioneers of AI such as Marvin Minsky, for instance, engaged closely with philosophical questions about the mind.

Kant brought the ideas of Aristotle a step further, introducing the concept of a priori categories: we humans, and by extension all animals, don’t just passively receive sensory data, but actively categorise it. Space and time, cause and effect, they don’t really exist “out there”, they’re features that we impose. We’re basically born with a categorisation framework.

Early work on Symbolic AI built on the ideas of Kant and others. From the fifties to the eighties, much of AI research went into building so-called expert systems, where rules and categories, in effect tags, were hand-coded. Much like the way we create hierarchical tags in our application.

Why manually tagging expert systems were the place to start with AI but not the place where it ended up.

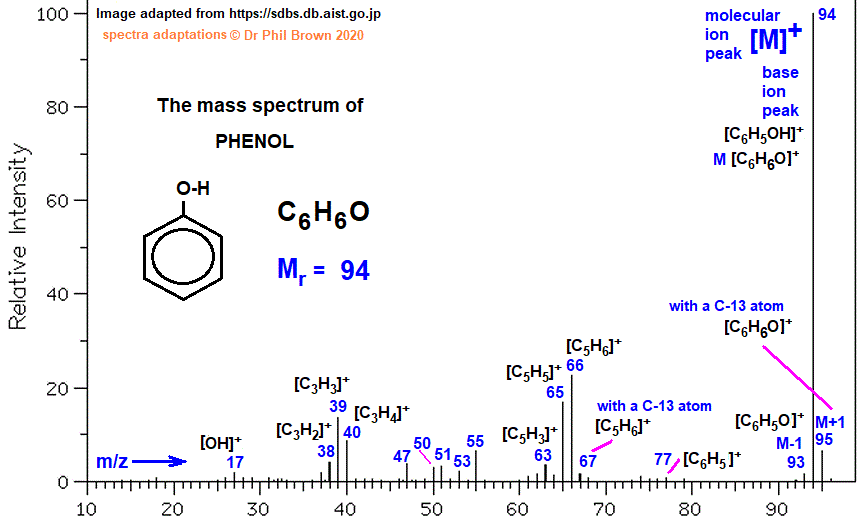

A perfect example is DENDRAL, an expert system for organic chemical mass spectrometry which was used from the sixties to the eighties. Its goal was to infer molecular structures. It encoded the rules and heuristics that chemists use to interpret mass spectrometry data.

IF the mass spectrum has a peak at 77 AND a peak at 91

THEN the molecule likely contains a phenyl group (C6H5).

But of course this wasn’t actually how the rules were formatted within the expert system. DENDRAL was written in Lisp, which stands for List Processing. It’s a programming language custom made for the AI of its time, and as the name says, it’s optimised for processing lists.

A simplified example for the phenyl group rule would be this:

    ;; Define a function to check for a phenyl group
    (defun has-phenyl-group (mass-spectrum &optional (tolerance 0.5))
      "Check if the mass spectrum indicates a phenyl group (peaks at 77 and 91)."
      (let ((has-77 nil)
            (has-91 nil))
        ;; Check for a peak near 77
        (dolist (peak mass-spectrum)
          (when (<= (abs (- (car peak) 77)) tolerance)
            (setf has-77 t)))
        ;; Check for a peak near 91
        (dolist (peak mass-spectrum)
          (when (<= (abs (- (car peak) 91)) tolerance)
            (setf has-91 t)))
        ;; Return result
        (and has-77 has-91)))

    ;; Example mass spectrum: a list of (mass . intensity) pairs
    (defparameter my-spectrum '((77 . 1000) (91 . 800) (43 . 500) (55 . 300)))

    ;; Evaluate the rule
    (if (has-phenyl-group my-spectrum)
        (format t "The molecule likely contains a phenyl group (C6H5).~%")
        (format t "No phenyl group detected.~%"))

This dummy function is specialised toward one specific chemical group. The real thing was more generic, probably looking more like this:

    ;; Define a rule structure
    (defstruct rule
      name
      condition
      conclusion)

    ;; Create the phenyl group rule
    (defparameter phenyl-rule
      (make-rule
       :name "phenyl-group-rule"
       :condition #'(lambda (spectrum)
                      (has-phenyl-group spectrum))
       :conclusion "The molecule likely contains a phenyl group (C6H5)."))

    ;; Example inference engine: collect the conclusion of every rule that fires
    (defun apply-rules (spectrum rules)
      (mapcan #'(lambda (rule)
                  (when (funcall (rule-condition rule) spectrum)
                    (list (rule-conclusion rule))))
              rules))

    ;; Example usage
    (apply-rules my-spectrum (list phenyl-rule))

Because Lisp code can be compiled and evaluated interactively, scientists could experiment with the rules directly, very much like we do in our Python shell when we want to test a hypothesis:

    ;; Hypothetical: Generate candidate structures and test them
    (defun generate-candidates (spectrum)
      "Generate plausible molecular structures based on the spectrum."
      (declare (ignorable spectrum))
      ;; This would use a library of fragmentation rules and heuristics
      '(structure1 structure2 structure3))

    (defun test-candidate (candidate spectrum)
      "Test if a candidate structure matches the observed spectrum."
      (declare (ignorable candidate spectrum))
      ;; A real version would compare predicted vs. observed spectrum.
      ;; Placeholder: accept every candidate.
      t)

    (defun interpret-spectrum (spectrum)
      "Generate and test candidate structures for the spectrum."
      (let ((candidates (generate-candidates spectrum)))
        (remove-if-not #'(lambda (candidate)
                           (test-candidate candidate spectrum))
                       candidates)))

If we were to implement our blanket tagging example in Lisp, it might look like this:

    ;; Define a rule for winter blankets
    (defparameter winter-rule
      (make-rule
       :name "winter-blanket-rule"
       :condition #'(lambda (tags)
                      (and (member 'wool tags)
                           (member 'handmade tags)))
       :conclusion "This blanket is likely suitable for winter use."))

    ;; Example tags
    (defparameter blanket-tags '(wool handmade red crochet))

    ;; Apply the rule
    (apply-rules blanket-tags (list winter-rule))

DENDRAL’s rules grew more complex at every turn, and were useless for anything outside mass spectrometry and organic chemistry.

This form of AI demanded a large upfront effort from humans, who had to hand-code the tags and categories behind systems such as DENDRAL. These systems were also very brittle, in that any erroneous input could result in a cascading set of wrong answers.

For DENDRAL, organic chemists spent years working with AI researchers to encode their knowledge into rules: identifying key mass spectrometry fragmentation patterns, defining heuristics for inferring molecular structures, validating and testing. As in any profession, much of this knowledge was locked up inside experts’ heads, often intuitive and unspoken.

As the rule base grew, the system became harder to maintain. DENDRAL’s rule base eventually became so large that adding a new rule required checking for interactions with thousands of existing rules, which was both time-consuming and error-prone.

On top of all that, the users of expert systems, doctors and chemists among them, needed extensive training to understand how to interact with the system, interpret its outputs, and provide feedback. This added another layer of human effort.

By the 1980s, it became clear that hand-coding rules was unsustainable, and that systems were too brittle for use in the real world, where data is often messy and inconsistent.

Funding for AI cratered, leading to what is called the AI Winter.

It was only in the later nineties and the 2000s that interest in AI revived, with a shift towards statistical methods such as machine learning and neural networks. These methods still use tagging, but they don’t require humans to hand-code every tag and rule themselves.

How does tagging help with training AI?

Instead of hand-coding rules, researchers focused on training models to learn patterns from data. This was made possible by advances in machine learning, in particular neural networks inspired by the human brain. A neural network could learn to recognise cats in images by analysing pixels, without humans having to define what a "cat" looks like.

Automating tagging by involving humans less and less

Humans provide a small set of labeled examples, like 1,000 blankets tagged with "wool", "cotton" or "crochet".

A neural network trains on this data to predict tags for new, unlabeled items.

The model generalises from the examples, reducing the need for manual tagging.
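The three steps above can be sketched in a few lines of Python. To keep the example self-contained it swaps the neural network for a much simpler nearest-neighbour classifier, and the features (fabric weight and thickness) and values are invented for illustration.

```python
import math

# Hypothetical labelled examples: (weight in g/m2, thickness in mm) -> tag.
# A human tagged these four; the numbers are made up for the sketch.
labelled = [
    ((450.0, 12.0), "wool"),
    ((480.0, 14.0), "wool"),
    ((200.0, 4.0), "cotton"),
    ((220.0, 5.0), "cotton"),
]


def predict_tag(features, examples):
    """Return the tag of the closest labelled example (1-nearest-neighbour)."""
    nearest = min(examples, key=lambda example: math.dist(example[0], features))
    return nearest[1]


# New, unlabelled blankets get a tag without anyone tagging them by hand.
print(predict_tag((460.0, 13.0), labelled))
print(predict_tag((205.0, 4.2), labelled))
```

A neural network replaces the "closest example" lookup with learned weights, but the division of labour is the same: humans tag a small sample, and the model tags the rest.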

The next step might be to allow AI to discover tags by itself.

Algorithms like k-means clustering or topic modelling group similar items based on their features, effectively creating tags automatically.

Example: If you have a dataset of blankets with features like colour, texture, and material, a clustering algorithm might discover groups like "winter wool blankets" or "lightweight cotton throws" without pre-defined tags.
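As a rough illustration of how such groups can emerge, here is a bare-bones k-means in plain Python. The two features (wool proportion and weight in kg), the data points and the choice of starting centroids are all assumptions made for the sketch.

```python
import math


def k_means(points, centroids, iterations=10):
    """Plain k-means: assign points to the nearest centroid, then recentre."""
    clusters = []
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for point in points:
            nearest = min(
                range(len(centroids)),
                key=lambda i: math.dist(point, centroids[i]),
            )
            clusters[nearest].append(point)
        # Move each centroid to the mean of its cluster (keep it if empty).
        centroids = [
            tuple(sum(dim) / len(cluster) for dim in zip(*cluster)) if cluster else old
            for cluster, old in zip(clusters, centroids)
        ]
    return centroids, clusters


# Hypothetical blankets as (wool proportion, weight in kg).
blankets = [(0.9, 2.0), (0.8, 1.8), (1.0, 2.2), (0.0, 0.8), (0.1, 0.6), (0.0, 0.7)]

# Start from two of the data points so the run is deterministic.
centroids, clusters = k_means(blankets, [blankets[0], blankets[3]])
print(clusters[0])
print(clusters[1])
```

The algorithm only finds the groups. Calling one of them "winter wool blankets" is still a naming step that a human, or another model, has to perform.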

In Pinterest Visual Search, users can upload an image of a blanket, and Pinterest’s deep learning model automatically tags it and finds similar items.

In our application, we use the same methods on a smaller scale

We use tagging in our application in order to attach meaningful, structured data to an entity. In the example, it’s colour, material, technique, origin. You could call them features: discrete, descriptive pieces of information that define the characteristics of an entity.

In AI, neural networks represent data as feature vectors: lists of numbers that stand for attributes. For example, an image of a blanket might be represented by vectors encoding colour, texture, pattern, etc.

For AI, we’d be encoding colour a bit like this:

Attribute: Dominant colours

Representation: 4 colours × 3 RGB values each

Example Values: [255, 0, 0, 0, 0, 255, 144, 238, 144, 0, 100, 0] (for red, blue, light green, dark green)

And material like this:

Attribute: Material

Representation: Proportions: [Wool, Synthetic, Cotton, Other]

Example Values: [0.6, 0.4, 0.0, 0.0]

The resulting vector representation might look a little like this (including the other attributes we mentioned):

[255, 0, 0, 0, 0, 255, 144, 238, 144, 0, 100, 0, 0.6, 0.4, 0.0, 0.0, 1, 0, 0, 0, 0, 0, 1, 0]

As you can see, this is much easier to ingest. It allows computers to quickly compare thousands of items using simple math.
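What that "simple math" can look like is sketched below, assuming cosine similarity as the comparison. The `encode` helper, the scaling of RGB values to the 0..1 range and the second blanket are assumptions added for the example.

```python
import math


def encode(colours_rgb, material_mix):
    """Flatten RGB triples (scaled to 0..1) and material proportions into one vector."""
    vector = [channel / 255 for rgb in colours_rgb for channel in rgb]
    vector.extend(material_mix)
    return vector


def cosine_similarity(a, b):
    """1.0 means the vectors point the same way; lower means less alike."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# The blanket from the article: red, blue, light green, dark green; 60% wool, 40% synthetic.
my_blanket = encode(
    [(255, 0, 0), (0, 0, 255), (144, 238, 144), (0, 100, 0)],
    [0.6, 0.4, 0.0, 0.0],
)
# A hypothetical white and grey cotton throw for comparison.
cotton_throw = encode(
    [(255, 255, 255), (255, 255, 255), (200, 200, 200), (200, 200, 200)],
    [0.0, 0.0, 1.0, 0.0],
)

print(cosine_similarity(my_blanket, my_blanket))
print(cosine_similarity(my_blanket, cotton_throw))
```

Scaling the colour channels keeps them from drowning out the material proportions, which would otherwise be hundreds of times smaller.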

In fact, our simple hierarchical tag also uses numerical representation. Humans get a user interface that tells them which attribute they are choosing, but what’s saved in the database is just a number.
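A small Python sketch of that idea follows. The lookup tables, ids and field names are hypothetical, chosen only to show how stored numbers map back to the labels a person sees.

```python
# Hypothetical lookup tables: the ids are illustrative, not the application's real ones.
CHOICES = {
    "industry": {1: "Software Development", 2: "Healthcare"},
    "job_category": {5: "Engineering", 6: "Design"},
    "location": {7: "Remote", 8: "On-site"},
}


def decode(stored):
    """Turn stored ids back into the labels a human sees in the interface."""
    return {field: CHOICES[field][value] for field, value in stored.items()}


def shared_fields(a, b):
    """Comparing two tags is just comparing integers, field by field."""
    return [field for field in a if a[field] == b.get(field)]


job_a = {"industry": 1, "job_category": 5, "location": 7}
job_b = {"industry": 1, "job_category": 6, "location": 7}

print(decode(job_a))
print(shared_fields(job_a, job_b))
```

The interface deals in words and the database deals in integers, so matching two jobs never has to compare strings.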

So one of our hierarchical tags could be represented in a similar, but much simpler way:

[1,5,7,4,3,7,3]

Which means this, again excluding some:

Industry: Software Development

Job Category: Engineering

Location: Remote

In using Django-Taggit to create hierarchical tags, we weren’t ahead of our time, but smart enough to recognise what AI has given our community.

A decade ago, when we built our job platform with Django-Taggit, we weren’t inventing the future. We were recognising a principle we were taught by Aristotle and Kant: that meaning emerges from structure.

Our hierarchical tags, whether for blankets, jobs, or products, were a manual implementation of what AI now does at scale: organising the world into features that can be compared, searched, and reasoned about.

We didn’t need neural networks to understand that tagging is the skeleton of knowledge. When a modern AI model learns to classify an image or recommend a product, it’s doing what we did by hand: extracting attributes (colour, material, technique) and using them to make connections. The difference is that we encoded those attributes as explicit tags, while AI infers them from data.

Without structure, there’s no understanding.

Our system wasn’t ahead of its time because the need for structure is eternal. What changed is the tooling. AI didn’t invent the concept of features; it just automated their discovery.

We build the scaffolding; AI fills in the gaps. And in doing so, it validates what we already knew: that the power of any system, be it human or machine, lies in its ability to categorise, compare, and connect.

Today, we could let AI suggest tags, predict missing attributes, or even infer new hierarchies from our data. But the core insight remains ours: tags aren’t just labels. They’re the language of comparison.

So, I wouldn’t say that the work we did so long ago foreshadowed AI. Tagging existed long before that, and lessons from AI labs had been percolating into regular software development for decades. We’re just lucky to have talent that picked up on the research and realised it in a working software solution.

That solution is still working today, in new jackets that we are launching one by one in the coming weeks.