Why the bottleneck is no longer dev time but PM time.

It takes so much longer to review a ticket than it takes the AI coding agent to do it. It’s crazy.

We’re a small software company based in Amsterdam. We use AI coding agents a lot, but we’re only now learning how to use them as a team, to do tickets.

Using coding agents is actually among the last steps we’re taking to integrate AI into every facet of our business. Long before the agents were good enough for production work, we’d been using AI to create tickets.

Now that we’ve got meticulously detailed and correctly structured tickets, we’re building a system where an AI coding agent can do tickets autonomously. The instruction is, for example, “do all tickets in project x, assigned to you, marked high”.
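To make that instruction concrete, here is a minimal sketch of how it could translate into a filter over a backlog. The `Ticket` fields, the status values, and the `select_tickets` helper are all made up for illustration; this is not our tracker's API, just the shape of the idea.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    # Hypothetical fields; a real tracker exposes far more than this.
    key: str
    project: str
    assignee: str
    priority: str
    status: str = "To Do"


def select_tickets(tickets, project, assignee, priority):
    """Return the open tickets matching the instruction, in backlog order."""
    return [
        t for t in tickets
        if t.project == project
        and t.assignee == assignee
        and t.priority == priority
        and t.status != "Done"  # skip work that is already finished
    ]


backlog = [
    Ticket("X-1", "x", "agent", "high"),
    Ticket("X-2", "x", "agent", "low"),
    Ticket("Y-7", "y", "agent", "high"),
    Ticket("X-3", "x", "dev", "high"),
    Ticket("X-4", "x", "agent", "high", status="Done"),
]

# "do all tickets in project x, assigned to you, marked high"
todo = select_tickets(backlog, project="x", assignee="agent", priority="high")
print([t.key for t in todo])
```

Selecting the work is the easy part. The rest of this post is about what happens after the agent has worked through that list.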

The autonomous coding agent works much faster, but leaves the human in the loop struggling to understand what’s been done and why.

I say “autonomously” but we aren’t quite there yet. A developer still guides the coding agent, the level of guidance dependent on how much free rein they are willing to give for a certain problem.

Giving the agent full freedom to do the task means that the human is no longer in the loop during the work. The agent reads the ticket, creates the solution, creates a fix or feature branch in git, commits and pushes, adds a comment to the ticket, and sets the ticket to the appropriate state.
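As a rough sketch, that sequence can be written down as a plan the agent follows. The branch naming scheme, the "In Review" state, and the `plan_steps` helper are assumptions for illustration only; the function just builds the list of steps and does not run git or touch a ticket system.

```python
import re


def branch_name(ticket_key, summary, kind="fix"):
    """Build a branch name such as fix/X-1-some-summary (hypothetical scheme)."""
    slug = re.sub(r"[^a-z0-9]+", "-", summary.lower()).strip("-")
    return f"{kind}/{ticket_key}-{slug}"


def plan_steps(ticket_key, summary, kind="fix"):
    """List the steps of a fully autonomous run, in order, without executing them."""
    branch = branch_name(ticket_key, summary, kind)
    return [
        f"read ticket {ticket_key}",
        f"git checkout -b {branch}",
        "create the solution",
        "git add -A",
        f'git commit -m "{ticket_key}: {summary}"',
        f"git push -u origin {branch}",
        f"add a comment to {ticket_key} describing the change",
        f"set {ticket_key} to In Review",  # assumed state name
    ]


for step in plan_steps("X-1", "Change background colour to blue"):
    print(step)
```

Note that nothing in this list involves a human. Every step is something the agent can do on its own, which is exactly where the review problem starts.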

But here’s the thing: at some point the work needs to be reviewed. And of course some tasks have more visible results than others. “Change the background colour to blue” isn’t too difficult to check.

Others, however, require deep knowledge of the underlying logic. When clients request changes in our reporting applications, figuring out which variables should be changed is quite challenging. The AI agent has already made the change and documented it, and we seem to see a change, but is it the correct one?

Coding is done in a flash but you might spend hours trying to get your head around it.

The challenge is not how to do a project, not how to do tickets, but how to keep a trail of consciousness.

I was just reading about Codex, the new offering from OpenAI. It looks to be a very capable orchestrating system, enabling developers to manage multiple coding agents to work on a project.

But just like it’s much simpler to have a human developer build a website from scratch than to handle suggestions brought in by humans, it’s much simpler to build a big project using multiple agents than to start thinking about whether this or that feature is useful in the real world. Maybe that’s why OpenAI’s Codex includes a game as an example: if there’s anything far removed from the real world, it’s a video game.

When a human developer handles a ticket, understanding the code is a large part of the work. It’s an experience often shared during review: “I spent x hours searching and thinking, in the end the fix was done in 5 minutes”.

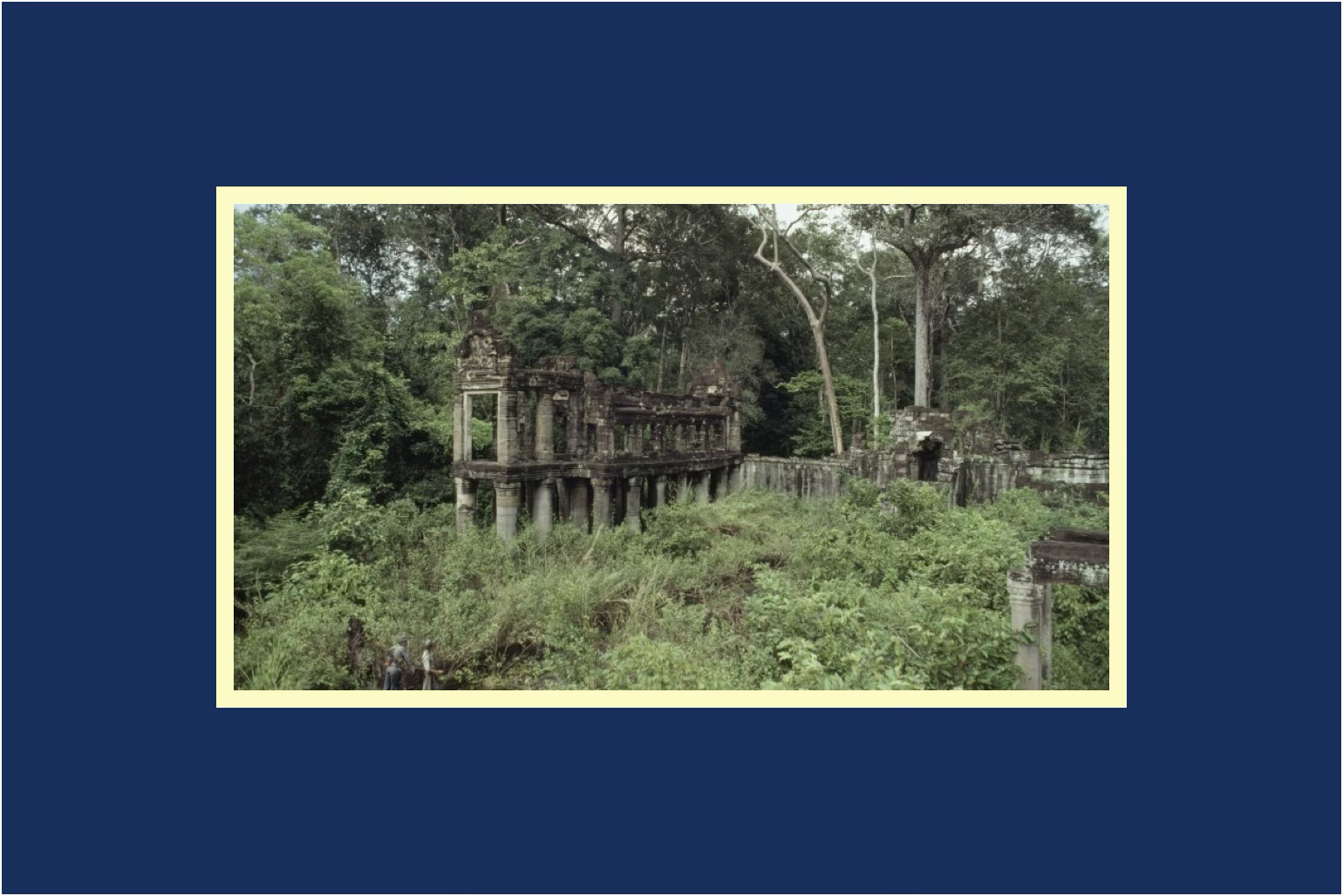

Working through a problem leaves a trail of consciousness. While we work, we create a picture of the landscape of the project. We’re wielding our machete, cutting ourselves a path through the impenetrable jungle, and we can tell that story to another human: “I survived the jungle and uncovered the riches of the lost kingdom hidden within”.

Yes, you’ll instruct your coding agent to explain its work in comments on the ticket, but there’s no one home to understand how the detail fits into the project, the project into the business, the business into the world, quite like a human would.

An agent’s context window is far too small to encompass real-life human endeavours.

Our agents can execute tasks rapidly, but the human review process, especially for complex logic, now dominates the timeline. The agent’s “trail of consciousness” is limited by its context window and lack of holistic project/business awareness.

Reviewers must reverse-engineer the agent’s decisions, especially for non-trivial changes. This is exacerbated when the agent operates autonomously, leaving humans to play catch-up.

For a human developer, the process of struggling through a problem builds a mental model of the system, which is shared during reviews. This narrative is missing when an agent “just does the work.”

Potential solutions mark what’s missing: trust

We can imagine solutions, such as instructing the agent to give a high-level narrative explaining the solution in the context of the project. Or a “developer diary” section in the ticket explaining its reasoning. Although anyone who has seen reasoning printed out in the console in real time knows that it’s going to be a LOT of text.
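One way to keep such a diary readable is to give it a fixed shape and cap how much of the exploration it shows. The sketch below is only an illustration of that idea: the `DiaryEntry` fields, the `render` function, and the cap of three steps are assumptions, not something we have settled on.

```python
from dataclasses import dataclass, field


@dataclass
class DiaryEntry:
    # Hypothetical structure for a "developer diary" comment on a ticket.
    ticket_key: str
    goal: str
    explored: list = field(default_factory=list)
    decision: str = ""
    open_questions: list = field(default_factory=list)


def render(entry, max_items=3):
    """Render the diary as short plain text, truncating the exploration log."""
    lines = [f"Developer diary for {entry.ticket_key}", f"Goal: {entry.goal}"]
    lines += [f"Looked at: {item}" for item in entry.explored[:max_items]]
    hidden = len(entry.explored) - max_items
    if hidden > 0:
        # Tell the reviewer that more exists instead of dumping all of it.
        lines.append(f"(+{hidden} more steps omitted)")
    lines.append(f"Decision: {entry.decision}")
    lines += [f"Check this: {q}" for q in entry.open_questions]
    return "\n".join(lines)


entry = DiaryEntry(
    ticket_key="X-1",
    goal="Client wants the monthly total to exclude refunds",
    explored=["report query", "totals helper", "refund flag", "export job"],
    decision="Filter refunds in the totals helper, not in the query",
    open_questions=["Should the yearly report change too?"],
)
print(render(entry))
```

The "Check this" lines are the part a reviewer would probably read first: they point at the places where the agent itself was unsure.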

One of the things we’re trying is to get the agent to build a high level diagram of the project and how it fits into the real world. The idea being that ingesting a picture is faster than piecing together reality from reams of text. For me at least.

What’s missing is trust through clarity and context. If we have the agent generate or update a high-level architecture diagram for each project, not only the application and its technical context, but also its business context, human reviewers will be able to see quickly what changes entail and how they help the business succeed.
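A diagram like that does not have to be drawn by hand. As a minimal sketch, assuming the agent can describe the project as a set of nodes and edges, it could emit Mermaid flowchart text with the parts it touched highlighted. The node names below are invented for the example, and `to_mermaid` is a hypothetical helper, not an existing tool.

```python
def to_mermaid(nodes, edges, changed=()):
    """Build Mermaid flowchart text; nodes in `changed` get a highlight colour."""
    lines = ["graph TD"]
    for node_id, label in nodes.items():
        lines.append(f'    {node_id}["{label}"]')
    for src, dst in edges:
        lines.append(f"    {src} --> {dst}")
    for node_id in changed:
        # Mermaid's `style` statement colours a single node.
        lines.append(f"    style {node_id} fill:#f96")
    return "\n".join(lines)


# Invented example: business context on top, technical parts below.
nodes = {
    "client": "Client reads monthly report",
    "report": "Reporting application",
    "totals": "Totals calculation",
    "db": "Sales database",
}
edges = [("client", "report"), ("report", "totals"), ("totals", "db")]

before = to_mermaid(nodes, edges)
after = to_mermaid(nodes, edges, changed=["totals"])
print(after)
```

Generating the diagram once without and once with the `changed` nodes gives a simple before and after pair for the reviewer.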

Embed these diagrams directly in Jira tickets or Confluence pages, and reviewers can see the visual changes alongside the code changes. Even better: an animation showing before/after.

This aligns with our goal of balancing automation with human oversight. Diagrams act as a “translation layer” between the agent’s precision and human intuition.

If we want to work well with AI coding agents, we’re going to have to get creative.

We don’t want AI coding agents only for new projects. In some respects, new projects are easy. We also want to automate menial tasks like ticket management: reading, prioritising, fixing, and deploying fixes. We value the speed of AI, but we want to keep humans in the loop for oversight.

In the end, we know that AI coding agents are here to stay, and they’re only going to get better. We’re going to have to get creative working with them.