Why You Should Really, Really, Really Run Your AI Models Locally.

As a small company, you can’t be dependent on the whims of Big Tech AI.

After escaping Big SaaS, we’re now falling for the product of Big Tech AI.

As our senior engineer says, we’ve become addicted to tokens. And Big Tech AI is the pusher.

But it doesn’t have to be that way. If the addiction is here to stay, we must at least grow our own supply.

—————-

We now have a pretty good idea of how to integrate agentic AI into our workflow. Now we must own the infrastructure.

—————-

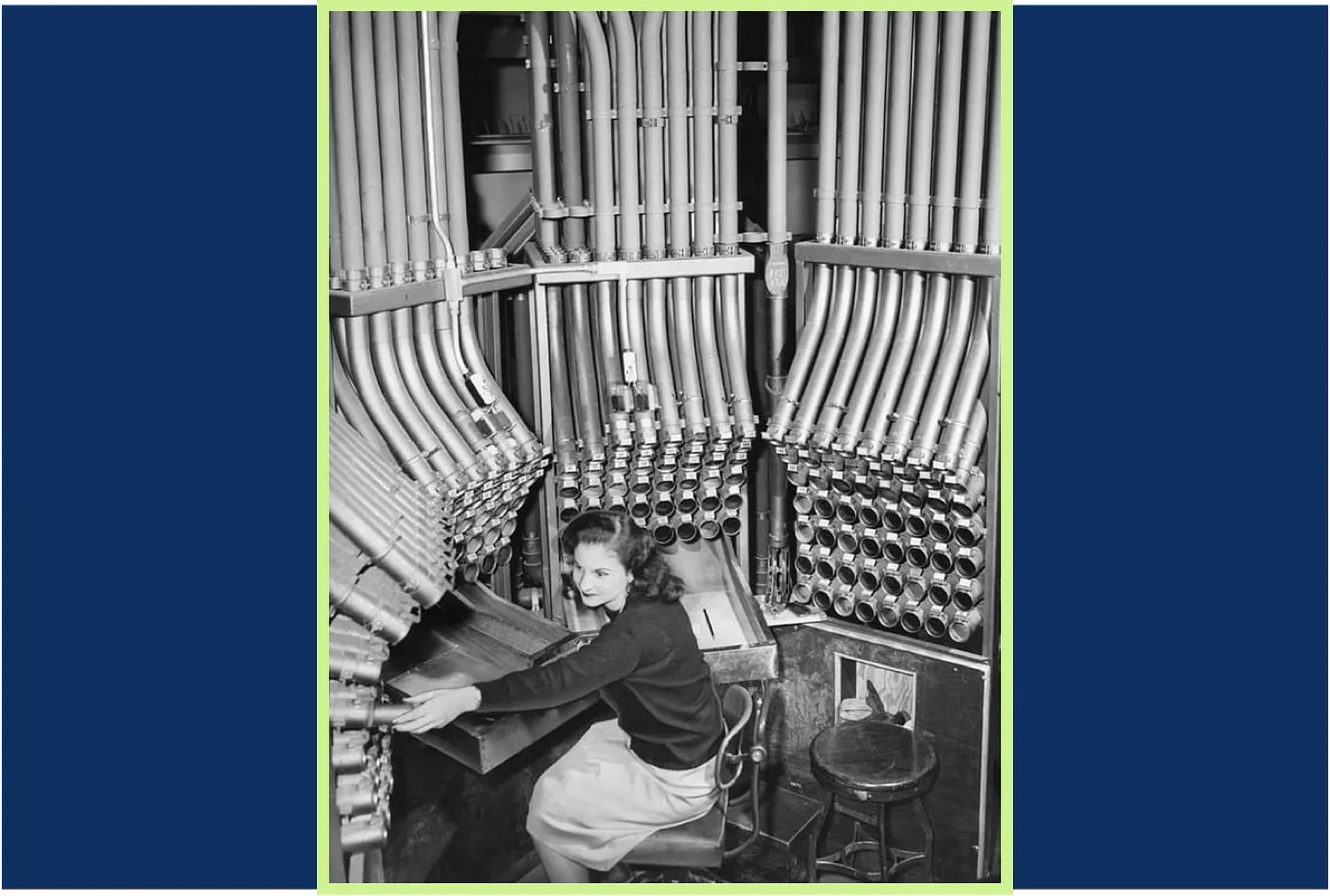

You might have seen them in old movies: the systems of pneumatic tubes that department store staff used to send messages and move cash around. Like a proto-internet.

But here’s the thing. We don’t own the pipes. Our landlord does. Worse, he demands a big cut of every sale.

The way out is to build our own.

—————-

We’re not going back to pneumatic pipes. But we may end up looking more like a 90s company with a server room.

—————-

Building your own infrastructure in 2026 is not as frightening as it seems. And no, we’re not REALLY going to build a server room.

We are going to have to consider hardware though. Is it going to be 50 Mac minis in colocation? Nvidia Jetsons? Some combination? Or just giving everyone a super high-end MacBook Pro?

What we do know is that we’re going to need hardware with the specs to run models like Mistral’s Codestral or Devstral. Or Kimi K2 and other sparse models.
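To make "specs" a bit more concrete, here is a rough back-of-the-envelope sketch for how much memory a model's weights need at a given quantization. The parameter counts, the 20% overhead for cache and runtime, and the machine sizes are illustrative assumptions, not vendor figures; check the actual model cards before buying anything.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int,
                     overhead: float = 1.2) -> float:
    """Approximate memory (in GB, 1e9 bytes) to hold a model's weights.

    overhead is an assumed multiplier for KV cache and runtime buffers;
    1.2 is a guess, not a measured value.
    """
    bytes_per_weight = bits_per_weight / 8
    return params_billions * bytes_per_weight * overhead


def fits(machine_memory_gb: float, params_billions: float,
         bits_per_weight: int) -> bool:
    """True if the estimated footprint fits in the machine's memory."""
    return weight_memory_gb(params_billions, bits_per_weight) <= machine_memory_gb


if __name__ == "__main__":
    # Hypothetical model sizes (billions of parameters) and machine memory (GB).
    for params in (24, 120):
        for bits in (4, 8, 16):
            need = weight_memory_gb(params, bits)
            print(f"{params}B @ {bits}-bit: ~{need:.0f} GB, "
                  f"fits in 64 GB: {fits(64, params, bits)}")
```

Note that for sparse (mixture-of-experts) models, all experts still have to sit in memory even though only a few are active per token, so the total parameter count is the number that matters here.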

But that’s just the start of it. We’ll also have to build a UI that can deliver a coding companion rather than a software development tool.

—————-

This is what recruiters will assess in the near future: the software engineer and their personal infrastructure.

—————-

Gone are the days when software engineers were faced with ridiculously fabricated programming problems.

Now, engineers take along their own rig.

They have their very personal set of helpers built into their persona. They’ll list their infrastructure as part of their CV.

—————-

Our inference costs easily surpass a thousand euros a month. Per dev. Something’s gotta give.

—————-

It’s clear that we can’t keep on like this. I use Warp’s Max tier with 18,000 tokens included. With me running three agents simultaneously and a fourth for creating tickets, I’ll be burning through 50,000 tokens this month.

We might as well hire a junior.
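The arithmetic behind that is simple enough to sketch. The thousand euros a month per dev comes from our own bills; the hardware price and the running cost below are placeholders I made up for illustration.

```python
import math
from typing import Optional


def breakeven_months(hardware_cost_eur: float,
                     monthly_api_cost_eur: float,
                     monthly_running_cost_eur: float = 0.0) -> Optional[int]:
    """Whole months until local hardware has paid for itself.

    Returns None if running locally saves nothing per month.
    """
    monthly_saving = monthly_api_cost_eur - monthly_running_cost_eur
    if monthly_saving <= 0:
        return None
    return math.ceil(hardware_cost_eur / monthly_saving)


if __name__ == "__main__":
    # 1000 EUR/month per dev is the figure from this post.
    # 5000 EUR hardware and 50 EUR/month power are hypothetical numbers.
    months = breakeven_months(5000, 1000, 50)
    print(f"Break-even after {months} months")
```

With those made-up numbers a machine pays for itself in about half a year per dev. It ignores the time spent building and maintaining the setup, which is the part that actually hurts.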

Solution? I’ve already started building my own coding companion on top of Mistral Vibe CLI. Backed by Devstral 2. An arduous task: Claude Opus says it’s more than a million traditional developer hours.

But I’m in luck. Warp just went open source. Using it as the interface cuts the effort considerably.

And of course I’m building it with the help of the very coding agents I wish to replace.

Plus: I’m just building what we need. I don’t have to build it for the whole developer community.

Just for us.